When I first began consulting as a technical SEO and digital operations strategist for civil engineering and materials testing laboratories across the UK, I discovered a profound disconnect. Laboratories were desperately trying to rank their services on Google by publishing thin, generic content about “quality control,” entirely missing the reality of what modern search engines—and more importantly, modern structural engineers—actually demand. Generative Engine Optimization (GEO) in 2026 relies on entity clustering, primary data, and undeniable, firsthand experience. To prove the value of deep technical accuracy, I conducted a forensic operational audit for a major London testing facility. What we uncovered wasn’t just an SEO content gap; it was a physical operational hemorrhage. The lab was losing lucrative contracts not because of poor marketing, but because their erratic compressive strength test results were triggering catastrophic project delays for their clients. The culprit? Mechanically degraded cast-iron testing equipment and a failure to adapt to low-carbon mix hydration kinetics.

This scenario perfectly illustrates the cost of unplanned downtime and mechanical degradation in construction materials testing. We rely on laboratory tests as the ultimate arbiter of structural safety. Yet, these tests are highly vulnerable to localized stress concentrations caused by out-of-tolerance equipment. A false negative does not simply result in a failed PDF report; it triggers a cascade of liability claims, structural recalculations, and in-situ destructive testing. The industry has historically spent millions over-designing cement mixes—wasting capital on unnecessary Ordinary Portland Cement (OPC)—solely to offset the statistical variance generated by imprecise laboratory procedures.

When dealing with modern supplementary cementitious materials (SCMs) and the stringent documentation demands of the 2026 UK Building Safety Regulator, transitioning to high-quality 100mm cube moulds has evolved from a laboratory preference into an operational necessity. As we navigate the complexities of civil engineering compliance today, the margin for error in materials testing has been legislated out of existence. This report dismantles the operational mechanics of concrete cube testing, analyzing the shift toward smaller specimen sizes, the exact impact of dimensional tolerances under BS EN 12390-1:2021, and the rigorous protocols required to eliminate human error from the laboratory floor while simultaneously building digital authority.

The Problem-Solution Pivot: Eliminating the False Negative

The fundamental pain point in materials testing is the discrepancy between concrete curing within a high-rise core and the concrete curing in a laboratory water tank. However, an even more insidious problem is the mechanical failure of the test specimen due to poor fabrication.

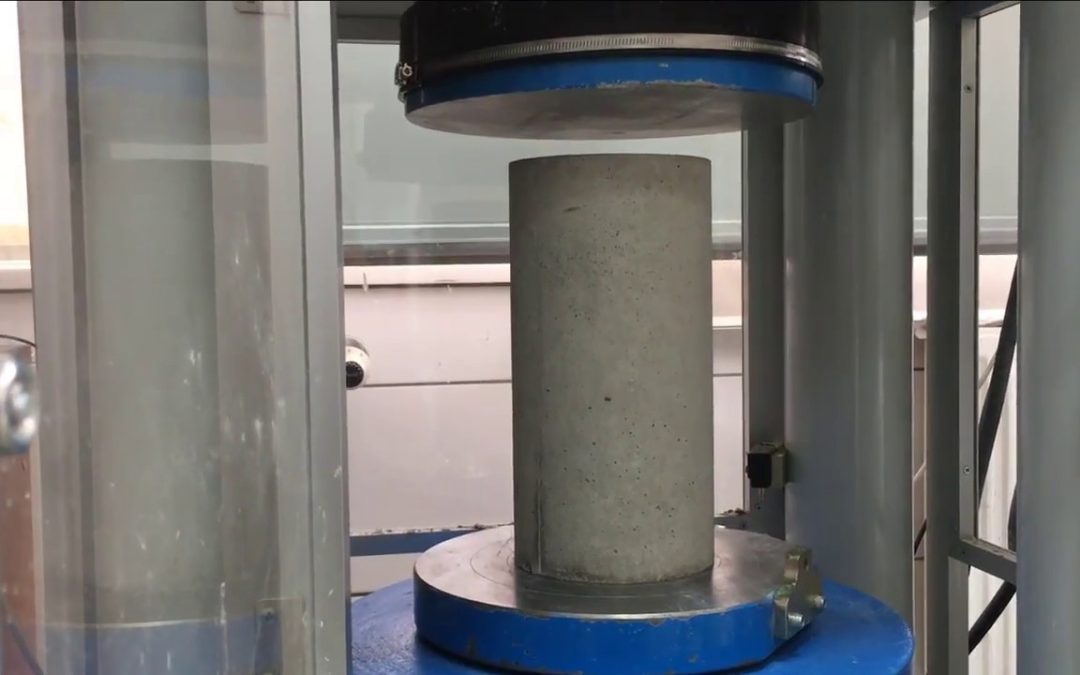

Compressive strength testing assumes that the load applied by the compression testing machine (CTM) is distributed perfectly evenly across the top and bottom faces of the cube. If a mould is warped, the resulting concrete cube will possess uneven, convex, or concave faces. When placed under the massive steel platens of the CTM, these microscopic high spots bear the entirety of the initial load. This creates massive localized shear stresses, inducing premature microcracking before the bulk of the concrete matrix is even engaged. The result is an artificially low ultimate failure load. Recent empirical studies demonstrate that utilizing degraded, low-quality moulds can lead to a compressive strength reduction of up to 18% compared to precision-calibrated equivalents.

For a beginner stepping into the world of materials testing or technical content creation, my greatest tip is to stop viewing equipment as static assets. A testing mould is a dynamic variable. Waiting for equipment to look “visibly worn” guarantees that dozens of batches have already been tested with compromised geometry. Laboratories must transition from reactive equipment replacement to proactive dimensional verification.

Quantifiable Benefits of Precision Testing

By upgrading to heavily reinforced, UKAS-calibrated cast-iron testing equipment—such as the high-quality 2-part models supplied by LabQuip Ltd—and instituting a strict metrological measurement regime, laboratories achieve distinct, quantifiable gains.

Data indicates that the financial cascade of a false negative is severe. An initial laboratory test may only cost a few hundred pounds, but a failure immediately triggers destructive in-situ verification. Diamond core drilling teams charge between £86 and £116 per 300mm hole, combined with daily labor rates exceeding £400. If structural integrity remains in question, the ensuing work stoppages and liability claims frequently push the total cost of a single bad break beyond the £50,000 threshold.
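To make the first stage of that cascade concrete, here is a minimal sketch that prices the coring work alone. The per-hole and labour figures are the ones quoted above; the function name, the number of holes, and the number of crew days are hypothetical inputs. Work stoppages and liability claims are deliberately left out.

```python
# Figures quoted in the article. Labour is stated as "exceeding £400" per day,
# so it is treated here as a lower bound.
CORE_RATE_LOW_GBP = 86
CORE_RATE_HIGH_GBP = 116
DAILY_LABOUR_MIN_GBP = 400


def coring_cost_range(holes: int, crew_days: int) -> tuple[int, int]:
    """Return a (low, high) estimate in GBP for in-situ core verification.

    `holes` and `crew_days` are hypothetical inputs; stoppage and
    liability costs are excluded from this estimate.
    """
    if holes < 0 or crew_days < 0:
        raise ValueError("holes and crew_days must be non-negative")
    labour = crew_days * DAILY_LABOUR_MIN_GBP
    low = holes * CORE_RATE_LOW_GBP + labour
    high = holes * CORE_RATE_HIGH_GBP + labour
    return low, high


low, high = coring_cost_range(holes=6, crew_days=2)
print(f"Coring verification alone: £{low:,} to £{high:,}")
```

Even with these modest assumed inputs, the drilling bill is several times the cost of the original laboratory test, and that is before any stoppage costs are counted.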

Financial Impact Breakdown of a False Negative Test

Beyond avoiding catastrophic losses, precision testing allows for the optimization of mix designs. When testing variance drops to near-zero, concrete producers no longer need to over-design their mixes with excess cement to ensure a safe statistical mean, cutting raw material costs significantly and lowering the overall embodied carbon of the project. Furthermore, from my perspective as an SEO strategist, laboratories that publish their rigorous, zero-variance testing methodologies build immense organic engagement. Search engines in 2026 reward entities that demonstrate unassailable technical expertise and transparent data structures.

The 2026 Regulatory Landscape: The Criminalization of Poor Testing

The regulatory environment surrounding construction materials in the UK has undergone a tectonic shift. Following the systemic failures exposed by the Grenfell Tower inquiry and the subsequent Independent Review of the Construction Product Testing Regime by Paul Morrell, the industry is operating under a fundamentally different legal framework.

As of January 8, 2026, the sweeping new regulatory framework enforced by the Office for Product Safety and Standards (OPSS) and the Building Safety Regulator (BSR) has taken full effect. Supported by a £43 million framework award to expand testing enforcement, the mandate is clear: testing and certification are no longer administrative tick-boxes; they are absolute commercial and legal imperatives.

Traceability and Documentation Constraints

Under the 2026 regulations, a compressive strength test report is worthless without an unbroken chain of custody. Regulatory oversight now demands exhaustive evidence-based assessment. If a structural component fails or requires an audit, investigators will look directly at the testing laboratory’s compliance trails.

Current documentation expectations dictate that laboratories and site engineers provide clearly marked sampling locations on active structural drawings, alongside photographic or digital timestamp records of the sampling and compaction process. Furthermore, laboratory test certificates must bear unique, indelible identification marks linking the sample to a specific calibrated testing unit.
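One way a laboratory might hold this evidence is as a structured record per specimen. The sketch below is illustrative only: the class, field names, and completeness check are hypothetical and do not represent any regulator's schema.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import List, Optional


@dataclass
class SampleRecord:
    """Hypothetical traceability record for one cube specimen."""
    sample_id: str                    # unique mark on the specimen and certificate
    drawing_ref: str                  # structural drawing showing the sampling location
    location_mark: str                # marked position on that drawing
    mould_id: str                     # calibrated unit the specimen was cast in
    sampled_at: Optional[datetime] = None            # digital timestamp, if captured
    photo_refs: List[str] = field(default_factory=list)  # photo evidence, if captured


def missing_evidence(record: SampleRecord) -> List[str]:
    """List the evidence gaps so they are caught before a certificate is issued."""
    gaps = []
    for name in ("sample_id", "drawing_ref", "location_mark", "mould_id"):
        if not getattr(record, name).strip():
            gaps.append(name)
    # The text asks for photographic OR timestamp records of sampling.
    if record.sampled_at is None and not record.photo_refs:
        gaps.append("photo_or_timestamp")
    return gaps
```

Running `missing_evidence` at the point of sample receipt, rather than at report time, keeps an incomplete chain of custody from reaching the crushing stage.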

A breach of these documentation standards, or the provision of misleading technical data due to poor equipment, is now classified as a criminal offence, exposing contractors and laboratory directors to severe sanctions. Utilizing substandard testing equipment that produces erratic results is now a direct pathway to regulatory prosecution.

The Operational Pivot: Volumetric Efficiency and Machine Capacity

For decades, the 150mm concrete cube has been the default standard in the United Kingdom and much of Europe. However, the landscape has rapidly shifted. According to BS EN 12390-1, the basic dimension of a concrete specimen must be at least three and a half times the maximum aggregate size. Given that the vast majority of modern structural mixes utilize 20mm or 28mm aggregate, which call for minimum dimensions of 70mm and 98mm respectively, the smaller 100mm variant is not only fully compliant but vastly superior from an operational standpoint.
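The aggregate rule is simple enough to check in code. This is a minimal sketch of the three-and-a-half-times relationship described above; the function names are my own.

```python
AGGREGATE_FACTOR = 3.5  # specimen size must be at least 3.5x the maximum aggregate size


def min_specimen_size_mm(max_aggregate_mm: float) -> float:
    """Smallest permissible specimen dimension for a given aggregate size."""
    return AGGREGATE_FACTOR * max_aggregate_mm


def is_cube_size_compliant(cube_mm: float, max_aggregate_mm: float) -> bool:
    """True if the cube is large enough for the aggregate in the mix."""
    return cube_mm >= min_specimen_size_mm(max_aggregate_mm)


for aggregate in (20, 28, 40):
    ok = is_cube_size_compliant(100, aggregate)
    print(f"{aggregate}mm aggregate needs >= {min_specimen_size_mm(aggregate):.0f}mm; "
          f"100mm cube {'passes' if ok else 'fails'}")
```

The 40mm case is included only to show where the 100mm cube stops being an option.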

Ergonomic Realities and Curing Optimization

The arithmetic of material testing heavily favors the smaller dimension. A standard 150mm cube possesses a volume of 3,375,000 mm³ and weighs approximately 8.1 kg. Conversely, the modern 100mm standard has a volume of just 1,000,000 mm³ and weighs approximately 2.4 kg.

When a laboratory processes hundreds of samples per week, this 70% reduction in mass translates to massive ergonomic benefits for laboratory technicians. It severely reduces physical fatigue and the likelihood of workplace injury during the demoulding and curing tank transfer processes. Furthermore, it saves a tremendous amount of space in temperature-controlled curing tanks, which are highly energy-intensive to run at the mandated (20 ± 2) °C.
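The volume and mass figures can be reproduced with a few lines. The density of 2,400 kg/m³ is an assumption on my part; it is the value implied by the quoted masses, not one stated in any standard cited here.

```python
ASSUMED_DENSITY_KG_M3 = 2400.0  # assumption: implied by the 8.1 kg and 2.4 kg figures


def cube_volume_mm3(side_mm: int) -> int:
    """Volume of a cube specimen in cubic millimetres."""
    return side_mm ** 3


def cube_mass_kg(side_mm: int, density_kg_m3: float = ASSUMED_DENSITY_KG_M3) -> float:
    """Approximate specimen mass; 1 m^3 equals 1e9 mm^3."""
    return cube_volume_mm3(side_mm) * 1e-9 * density_kg_m3


mass_150 = cube_mass_kg(150)
mass_100 = cube_mass_kg(100)
reduction = 1 - mass_100 / mass_150
print(f"150mm: {mass_150:.1f} kg, 100mm: {mass_100:.1f} kg, reduction: {reduction:.0%}")
```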

Machine Capacity and Ultra-High-Performance Mixes

A critical, often overlooked engineering advantage relates to the compression testing machine itself. As structural designs demand ultra-high-performance concrete (UHPC) with compressive strengths exceeding 100 MPa, standard 2000 kN compression machines physically struggle to crush 150mm cubes. Because the cross-sectional area of a 100mm specimen (10,000 mm²) is less than half that of the older standard (22,500 mm²), laboratories can successfully test significantly higher-grade concretes without needing to invest upwards of £30,000 in a new 3000 kN or 4000 kN hydraulic press.
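The capacity argument comes down to load equals stress times area. A minimal sketch, using the fact that 1 MPa equals 1 N/mm²; the function names are illustrative.

```python
def cube_area_mm2(side_mm: float) -> float:
    """Loaded cross-sectional area of a cube specimen."""
    return side_mm * side_mm


def required_load_kn(strength_mpa: float, side_mm: float) -> float:
    """Failure load in kN for a cube of the given strength (1 MPa = 1 N/mm^2)."""
    return strength_mpa * cube_area_mm2(side_mm) / 1000.0


def max_strength_mpa(capacity_kn: float, side_mm: float) -> float:
    """Highest cube strength a machine of the given capacity can reach."""
    return capacity_kn * 1000.0 / cube_area_mm2(side_mm)


for side in (150, 100):
    print(f"{side}mm cube at 100 MPa needs {required_load_kn(100, side):.0f} kN; "
          f"a 2000 kN machine tops out at {max_strength_mpa(2000, side):.0f} MPa")
```

A 100 MPa mix in a 150mm cube needs 2,250 kN, which is beyond a 2000 kN frame, while the same mix in a 100mm cube needs only 1,000 kN.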

Addressing the Conformity Factor Myth

There has historically been resistance from traditional structural engineers regarding the shift in size, driven by the assumption that the smaller volume exhibits higher statistical variability. However, exhaustive comparative studies contradict this. Research by the Public Works Central Laboratory, analyzing thousands of batches across various projects, demonstrated a highly predictable relationship. The mean compressive strength ratio of a 100mm specimen relative to a 150mm specimen is practically established at 1.05. The distribution of stress through the smaller cross-section is uniform enough to provide highly defensible data for compliance, provided the metrology of the equipment itself is flawless.
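To show what a mean ratio of 1.05 implies in practice, here is a short illustration. It applies the quoted mean ratio to a single result purely for intuition; it is an assumption of this sketch that the ratio can be used this way, and it is not a conversion procedure from any standard.

```python
MEAN_RATIO_100_TO_150 = 1.05  # mean strength ratio quoted above (100mm relative to 150mm)


def equivalent_150mm_strength(strength_100mm_mpa: float) -> float:
    """Indicative 150mm-cube strength implied by a 100mm-cube result."""
    return strength_100mm_mpa / MEAN_RATIO_100_TO_150


result_100 = 52.5  # hypothetical 100mm cube result in MPa
print(f"{result_100} MPa on a 100mm cube is roughly "
      f"{equivalent_150mm_strength(result_100):.1f} MPa on a 150mm cube")
```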

Dimensional Tolerances: Decoding BS EN 12390-1:2021

If the civil engineering industry is to rely on smaller cross-sectional volumes, the dimensional accuracy of the testing equipment must be absolute. The transition from the older BS EN 12390-1:2012 standard to the 2021 update brought refined scrutiny and tighter strictures to the calibration of concrete testing equipment.

Under BS EN 12390-1:2021, calibrated testing equipment must be manufactured from steel or cast iron, serving as the definitive reference materials. While high-impact ABS plastic or polystyrene models are excellent for single-use, high-volume environments to prevent cross-contamination, they do not possess the long-term rigidity required for repeated laboratory metrology without specific in-use performance test data demonstrating long-term equivalence. The European standard dictates ruthless tolerances that separate professional testing equipment from cheap, mass-market alternatives.

The Metrology of Calibration

To maintain UKAS (United Kingdom Accreditation Service) ISO/IEC 17025 accreditation, laboratories must subject their equipment to rigorous annual metrological checks.

- Size and Height: The tolerance on the designated size (d) of the assembled unit is 0.5%. For a 100mm unit, this allows a dimensional deviation of just 0.5mm.
- Flatness: The tolerance on the flatness of the four side faces is 0.0006d mm. For a 100mm unit, the internal flatness must not deviate by more than 0.06 mm.
- Perpendicularity: Adjacent faces, and the sides and the baseplate, must be square to one another within a maximum allowable deviation of 0.5 mm.
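The three limits above scale with the designated size, so they are easy to tabulate for any unit. The sketch below uses the tolerance values exactly as quoted in this article; the class and function names are hypothetical, and the standard itself should be consulted before relying on the numbers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MouldLimits:
    size_mm: float              # allowed deviation on the designated size d
    flatness_mm: float          # allowed deviation on side-face flatness
    perpendicularity_mm: float  # allowed deviation from square


def mould_limits(d_mm: float) -> MouldLimits:
    """Limits as quoted in the text: 0.5% of d, 0.0006d, and 0.5 mm."""
    return MouldLimits(size_mm=0.005 * d_mm,
                       flatness_mm=0.0006 * d_mm,
                       perpendicularity_mm=0.5)


def within_limits(d_mm: float, measured_size_mm: float,
                  flatness_gap_mm: float, squareness_gap_mm: float) -> bool:
    """True if all three measurements fall inside the quoted limits."""
    lim = mould_limits(d_mm)
    return (abs(measured_size_mm - d_mm) <= lim.size_mm
            and flatness_gap_mm <= lim.flatness_mm
            and squareness_gap_mm <= lim.perpendicularity_mm)


print(mould_limits(100))
print(within_limits(100, measured_size_mm=100.2, flatness_gap_mm=0.08, squareness_gap_mm=0.2))
```

The second call returns `False` because a 0.08 mm flatness gap exceeds the 0.06 mm limit, even though the size and squareness readings are acceptable.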

The Mechanics of Verification

Laboratory managers cannot simply eyeball these metrics. Verifying flatness and perpendicularity requires a precise metrology toolkit: a Class 1 engineer’s square, a calibrated straight edge, and a set of master feeler gauges capable of measuring gaps down to 0.05 mm or 0.02 mm.

During a UKAS audit or routine maintenance check, the straight edge is placed across the internal faces, and the technician attempts to pass the 0.05mm feeler gauge beneath it. If the gauge passes, the equipment is warped. The resulting concrete face will be convex or concave, and the unit must be instantly red-tagged and removed from service. Attempting to save a few pounds by repeatedly using distorted testing equipment is a false economy that will inevitably trigger a catastrophic project dispute.

The Nuance of Low-Carbon Binders and SCMs

The global push toward sustainable construction has entirely rewritten modern mix designs. Traditional Ordinary Portland Cement (OPC) is being rapidly phased out in favor of mixes heavily dosed with Supplementary Cementitious Materials (SCMs) such as ground granulated blast-furnace slag (GGBS), pulverized fuel ash (fly ash), and modern calcined clays. We are even seeing the rise of Carbon Capture and Utilization (CCU), where firms infuse mineralised carbon dioxide directly into the concrete screed.

While these low-carbon mixes drastically reduce embodied CO2 emissions—sometimes by up to 80%—they introduce severe complications on the laboratory floor.

Altered Hydration Kinetics and Demoulding Mechanics

The primary engineering issue with SCMs—particularly GGBS and fly ash at high replacement levels of 50% or more—is the severe retardation of the early hydration process. The exothermic reaction is significantly slower. This means that at the 24-hour mark, the concrete is considerably greener, softer, and more fragile than a traditional OPC mix.

When a technician attempts to demould a low-carbon specimen at 24 hours, the risk of surface spalling or corner fracture is exceptionally high. If a corner chips during the demoulding process, the cross-sectional area is compromised, and the ultimate compressive strength reading will be fundamentally invalid.

This altered chemistry necessitates the use of highly specialized, chemically active release agents. Standard shuttering oils or cheap motor oils are no longer sufficient. Laboratories must utilize advanced demoulding oils (such as Q8 Dalton 500) that provide an outstanding wetting effect and prevent the fragile concrete cake from adhering to the cast iron. The oil must be applied thinly and evenly; pooling oil in the corners will displace the cement paste, creating rounded, weakened edges that stop the cube faces from seating flat against the CTM platens.

Eradicating Human Error: The Standardized Casting Protocol

Even the most precisely machined, UKAS-calibrated cast-iron equipment is rendered entirely useless by an untrained or rushed technician. Human error during the sampling, compaction, and curing phases accounts for the vast majority of strength discrepancies and false negatives in civil engineering. Adhering blindly to outdated, ground-level habits is a recipe for compliance failure.

The Geometry of Compaction

Compaction is not a matter of brute force; it is a matter of geometric precision. According to standards like BS EN 12390-2, concrete poured into a 100mm unit must be deposited in specific volumetric layers. For a 100mm specimen, this typically requires the concrete to be poured and compacted in two distinct layers.

Each layer must be subjected to a minimum of 25 strokes using a standardized steel tamping rod (typically 16mm in diameter with a rounded end). The critical error technicians make is allowing the tamping rod to forcibly strike the steel baseplate when compacting the first layer, or penetrating too deeply into the first layer when compacting the second. This aggressive action causes immediate material segregation, driving the heavy coarse aggregate to the bottom and leaving a weak, watery layer of laitance at the top, which will crush immediately under load. After tamping, the external sides of the unit must be smartly tapped with a rubber mallet to release entrapped air pockets, ensuring maximum density without disturbing the aggregate matrix.
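The casting rules above lend themselves to a simple checklist. This is a minimal sketch, assuming a technician records the strokes per layer and two yes/no observations; the record structure and fault messages are hypothetical.

```python
from dataclasses import dataclass
from typing import List

REQUIRED_LAYERS = 2          # as described above for a 100mm specimen
MIN_STROKES_PER_LAYER = 25   # minimum tamping strokes per layer


@dataclass
class CompactionLog:
    strokes_per_layer: List[int]        # one entry per layer, bottom first
    rod_struck_baseplate: bool = False  # technician's own observation
    sides_tapped: bool = False          # mallet tapping completed after tamping


def compaction_faults(log: CompactionLog) -> List[str]:
    """Return a list of protocol deviations; an empty list means none found."""
    faults = []
    if len(log.strokes_per_layer) != REQUIRED_LAYERS:
        faults.append(f"expected {REQUIRED_LAYERS} layers, got {len(log.strokes_per_layer)}")
    for i, strokes in enumerate(log.strokes_per_layer, start=1):
        if strokes < MIN_STROKES_PER_LAYER:
            faults.append(f"layer {i}: only {strokes} strokes")
    if log.rod_struck_baseplate:
        faults.append("tamping rod struck the baseplate")
    if not log.sides_tapped:
        faults.append("sides not tapped to release entrapped air")
    return faults


print(compaction_faults(CompactionLog([25, 20], sides_tapped=True)))
```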

Environmental Variables: The Curing Imperative

Initial curing on the construction site is an area fraught with danger. Specimens must be shielded from moisture loss and extreme ambient temperatures for the first 16 to 24 hours before being transported to the laboratory. Leaving un-demoulded specimens exposed to direct summer sun or overnight frost permanently alters the microstructure of the cement matrix, ensuring the 28-day break will be a failure regardless of the actual mix quality.

Once in the laboratory, the final curing environment must be immaculate. The specimens are submerged in thermostatically controlled water tanks. These tanks must be maintained strictly at (20 ± 2) °C. Fluctuations in this temperature either artificially accelerate or retard the hydration process, rendering the final compressive strength result entirely unrepresentative of the concrete’s true structural potential.
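A curing tank log can be screened against the (20 ± 2) °C band automatically. This is a minimal sketch that assumes the readings arrive as a plain list of temperatures in °C; how they are logged in a given laboratory is outside its scope.

```python
from typing import List, Tuple

TARGET_C = 20.0
TOLERANCE_C = 2.0


def out_of_range(readings_c: List[float]) -> List[Tuple[int, float]]:
    """Return (index, temperature) for every reading outside 20 +/- 2 degC."""
    return [(i, t) for i, t in enumerate(readings_c)
            if abs(t - TARGET_C) > TOLERANCE_C]


tank_log = [20.1, 18.0, 22.0, 22.4, 17.5]  # hypothetical readings
for index, temp in out_of_range(tank_log):
    print(f"reading {index}: {temp} degC is outside the permitted band")
```

Readings of exactly 18.0 °C and 22.0 °C sit on the boundary and are treated as acceptable here.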

Digital Authority and Technical Trust: The SEO Convergence

From my vantage point as a technical SEO expert, the meticulous attention to detail required in a concrete testing laboratory mirrors the exact requirements of Google’s evolving search algorithms. In 2026, creating content that ranks is no longer about keyword density; it is about establishing verifiable Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T).

When suppliers like LabQuip Ltd publish exhaustive, technically accurate data regarding BS EN 12390-1 tolerances, metrology, and hydration kinetics, they are not just educating the market—they are signaling profound authority to AI-driven search models. Search engines prioritize entities that provide deep, original insights and solve real-world engineering complications. By avoiding generic marketing fluff and focusing on the granular physics of materials testing, businesses establish themselves as the definitive, trustworthy source in their niche.

Concrete compressive strength testing in 2026 is an exercise in extreme precision. The transition toward smaller, 100mm volumetric cross-sections represents a vital evolution in laboratory efficiency, reducing manual handling risks and optimizing machine capacity without sacrificing statistical validity. However, this shift is only effective if backed by a rigorous adherence to European dimensional tolerances. In an era dominated by slower-curing low-carbon concrete and governed by the draconian oversight of new Building Safety Regulations, laboratories cannot afford to view testing equipment as static commodities. Calibrated equipment, regular feeler-gauge verification, engineered demoulding chemistry, and flawlessly trained technicians are the absolute minimum requirements for operational survival. The upfront cost of precision metrology is microscopic when compared to the devastating financial and legal fallout of a preventable failure.

10 Frequently Asked Questions (FAQs) About Concrete Cube Testing

FAQ: Why is the civil engineering industry moving from 150mm to 100mm concrete cube moulds? The shift is primarily driven by operational efficiency, ergonomics, and cost savings. A 100mm specimen requires roughly 70% less concrete by volume and weighs only 2.4 kg, compared to the 8.1 kg mass of a 150mm specimen. This significantly reduces technician fatigue, saves valuable space in temperature-controlled curing tanks, and allows standard 2000 kN testing machines to crush much higher-strength concrete mixes. Statistically, as long as the maximum aggregate size does not exceed 28mm, 100mm units provide highly accurate and reliable compressive strength data.

FAQ: What are the exact dimensional tolerances for a mould under BS EN 12390-1:2021? The standard mandates exceptional precision for calibrated metal testing equipment. The tolerance on the designated size (width, depth, height) is strictly 0.5%. The flatness of the internal side faces must not deviate by more than 0.0006d mm (meaning 0.06mm for a 100mm unit), and the perpendicularity (squareness) between adjacent sides and the base must be within 0.5mm. Failure to meet these tolerances will result in uneven load distribution during crushing, yielding false, low-strength results.

FAQ: How do laboratory technicians properly calibrate and verify testing equipment? Verification is achieved using specialized metrology equipment. A technician must use a vernier caliper to check the internal dimensions. To check flatness, a calibrated straight edge is placed across the internal faces, and a feeler gauge (typically 0.05mm or smaller) is used to probe for gaps beneath the straight edge. Perpendicularity is checked by placing a Class 1 engineer’s square in the corners and attempting to pass a 0.5mm feeler gauge between the square and the internal wall. If the gauges pass, the unit is out of tolerance.

FAQ: What is the real financial cost of a failed concrete compressive strength test? A single false negative generated by the laboratory can cost a contractor between £10,000 and £50,000+. While the initial lab test only costs a few hundred pounds, a failure triggers a catastrophic chain of events. It forces work stoppages, halts subsequent pours, and requires the mobilization of specialist diamond core drilling teams (costing £86-£116 per hole plus daily labor) to extract in-situ samples for verification. If the structural integrity is genuinely in doubt, it can lead to massive liability claims and the physical demolition of the structure.

FAQ: How does low-carbon concrete (GGBS / Fly Ash) affect the testing process? Modern sustainable concrete mixes replace traditional Portland cement with Supplementary Cementitious Materials (SCMs) like GGBS or fly ash. These materials possess slower hydration kinetics, meaning the concrete takes longer to set and develop early-age strength. For lab technicians, this makes demoulding at 24 hours highly precarious, as the concrete is fragile and prone to corner fractures. It requires meticulous handling and the application of high-quality, chemically active demoulding oils to prevent sticking.

FAQ: What are the most common human errors in concrete compressive testing? The majority of low strength results stem from poor fabrication rather than a bad mix design. Common errors include: incorrect sampling methods, using uncalibrated or dirty equipment, improper compaction (e.g., striking the baseplate with the tamping rod or failing to apply the required 25 strokes per layer), leaving the samples exposed to extreme temperatures on-site, and failing to maintain the curing tank water at the mandated (20 ± 2) °C.

FAQ: Why is the 2026 UK regulatory framework for construction products so important for laboratories? Following systemic industry failures, the Building Safety Regulator (BSR) and the Office for Product Safety and Standards (OPSS) implemented strict new frameworks taking effect in January 2026. Testing is now heavily regulated to prevent the use of substandard materials. Laboratories must provide absolute traceability, including photographic or timestamped evidence of sampling, sample locations clearly marked on the structural drawings, and unbroken chains of custody. Failing to provide accurate, compliant documentation is now a criminal offence.

FAQ: Do I need a specific type of demoulding oil for cast iron testing equipment? Yes. Using standard motor oil or cheap shuttering oil leads to surface staining, air-bubble entrapment (bug holes), and sticking, which can ruin the geometry of the concrete upon release. Laboratories should use specialized demoulding agents, such as Q8 Dalton 500, which offer a high wetting effect, protect the cast iron from rust, and create a chemical barrier that allows the concrete to release effortlessly without damaging the sharp edges required for accurate compression testing.

FAQ: Can I use plastic or polystyrene moulds instead of heavy cast iron? While heavy-duty ABS plastic or single-use polystyrene models are available and excellent for high-volume, single-use scenarios to prevent cross-contamination, BS EN 12390-1 explicitly identifies steel and cast iron as the primary reference materials for calibrated testing. If plastic models are used, the laboratory must possess in-use performance test data demonstrating long-term dimensional equivalence with cast iron. For rigorous UKAS-accredited laboratory work, heavy cast iron remains the gold standard for resisting distortion during compaction.

FAQ: What happens during the compression test if a concrete specimen has a convex or un-level face? If a specimen is cast in a warped unit, its faces will not be perfectly flat. When placed in the Compression Testing Machine (CTM), the rigid steel platens will contact the microscopic high spots of the concrete first, rather than distributing the load evenly across the entire 10,000 mm² surface. This creates massive point-loads that cause the concrete to shatter and shear prematurely, recording an ultimate strength that is drastically lower than the actual structural capacity of the concrete batch.
